We wanted to try out ways of sensing motion during performances and using it to control lights and other aspects of the show. For a percussionist, the most important movements happen at the hands, but since they are already holding sticks, we needed a wearable solution for motion sensing.

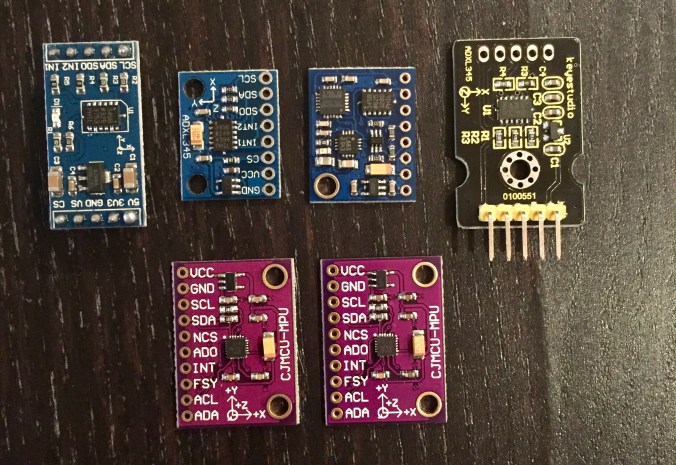

I bought a number of different motion sensors, often called IMUs (Inertial Measurement Units), from AliExpress. These little boards carry a tiny sensor of the kind often used in smartphones or game controllers, on a board just big enough to solder wires to and use in prototyping.

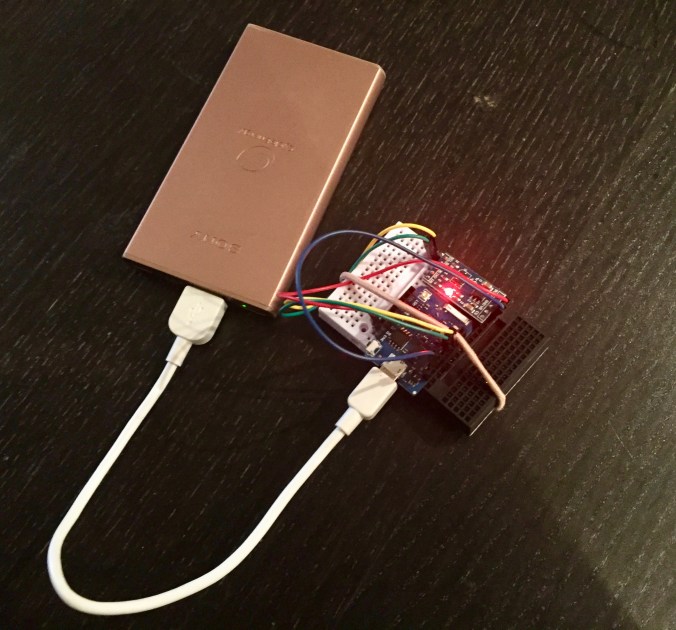

Some of these boards are more powerful, including accelerometers, gyroscopes and digital compass sensors, but their data is more complex to process, so for our prototype performance we chose a simpler accelerometer board (ADXL345). I found SparkFun’s hookup guide and library really helpful in getting started with this chip, and we soon had a hacked-together wireless sensor to experiment with.

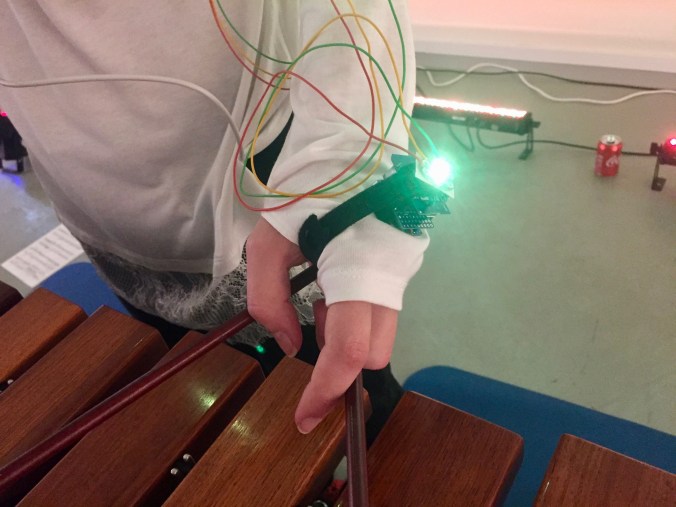

We tried out a few ways of mapping accelerometer data to lights in our development sessions. The most successful so far was to detect “strike” gestures, marked by a sharp increase in aggregated acceleration, and use that to turn on the costume lights. This way, the lighting on Christina’s costume was related to her percussive gestures.
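To make the "strike" idea concrete, here is a minimal sketch in Python, not the code we ran on the sensor. It assumes "aggregated acceleration" means the magnitude of the x/y/z vector, and that a strike is a jump in that magnitude between two consecutive samples. The names `detect_strikes`, `jump_threshold` and `refractory` are made up for illustration, and the values are placeholders that would need tuning against real data.

```python
import math


def magnitude(x, y, z):
    """Combine the three axes into a single acceleration magnitude."""
    return math.sqrt(x * x + y * y + z * z)


def detect_strikes(samples, jump_threshold=1.5, refractory=3):
    """Return the indices of samples that look like the start of a strike.

    samples        -- iterable of (x, y, z) accelerometer readings (assumed in g)
    jump_threshold -- hypothetical minimum rise in magnitude between two
                      consecutive samples to count as a strike
    refractory     -- hypothetical number of samples to ignore after a strike,
                      so one hit is not reported several times
    """
    strikes = []
    previous = None
    cooldown = 0
    for index, (x, y, z) in enumerate(samples):
        current = magnitude(x, y, z)
        if cooldown > 0:
            # Still inside the quiet period after the last strike.
            cooldown -= 1
        elif previous is not None and current - previous > jump_threshold:
            strikes.append(index)
            cooldown = refractory
        previous = current
    return strikes


if __name__ == "__main__":
    # Synthetic data: resting at about 1 g, with two sudden spikes.
    resting = (0.0, 0.0, 1.0)
    samples = [resting] * 3 + [(0.0, 0.0, 3.0)] + [resting] * 5 + [(3.0, 0.0, 0.0)]
    for index in detect_strikes(samples):
        print(f"strike at sample {index}: lights on")
```

Using the magnitude rather than a single axis means the detection does not depend on how the sensor happens to be oriented on the hand, and the short quiet period after each strike keeps a single hit from triggering the lights more than once.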

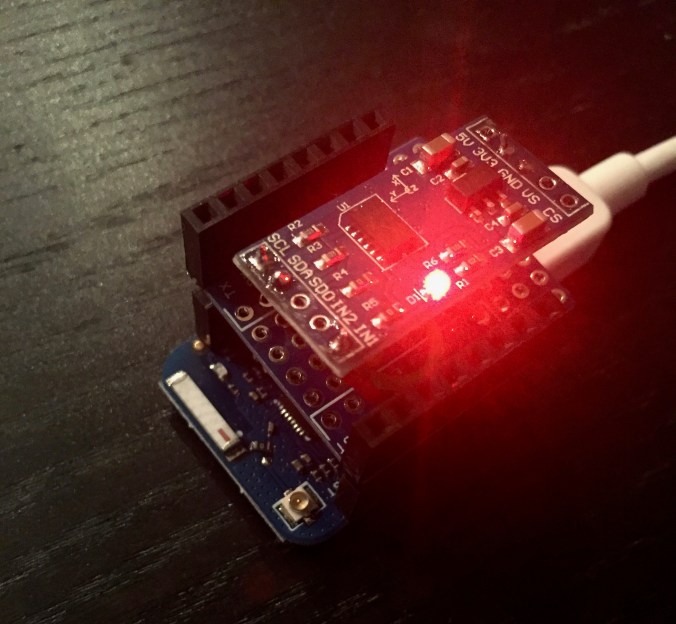

I soldered one of the ADXL sensors to a Wemos D1 Mini board to make a lighter and smaller sensor that we could use in performance.

In the prototype performance, we just velcro’d this to Christina’s hand and attached a small USB battery to her forearm for power. We could mount her hand LED right on top of the motion sensor. Simple, but it worked!